In a further sign of humanity’s inevitable journey towards dystopia, live trials of an autonomous sea-based killer robot made the news recently. If all goes well, it could be released into the wild within a couple of months.

Here’s a picture. Notice its cute little foldy-out arm at the bottom, which happens to contain the necessary ingredients to provide a lethal injection to its prey.

Luckily for us, this is the COTSbot, which, in a backwards version of nominative determinism, has a type of starfish called “Crown Of Thorns Starfish” as its sole target.

The issue with this type of starfish is that they have got a bit out of hand around the Great Barrier Reef. Apparently at a certain population level they live in happy synergy with the reef. But when the population increases to the size it is today (quite possibly as a result of human farming techniques) they start causing a lot of damage to the reef.

Hence the Australian Government wants rid of them. It’s a bit fiddly to have divers perform the necessary operation, so some Queensland University of Technology roboticists have developed a killer robot.

The notable feature of the COTSbot is that it may (??) be the first robot that autonomously decides whether it should kill a lifeform or not.

It drives itself around the reef for up to eight hours per session, using its computer vision and a plethora of processing and data science techniques to look for the correct starfishes, wherever they may be hiding, and perform a lethal injection into them. No human is needed to make the kill / don’t kill decision.

Want to see what it looks like in practice? Check out the heads-up-display:

If that looks kind of familiar to you, perhaps you’re remembering this?

Although that one is based on technology from the year 2029 and is part of a machine that looks more like this.

(Don’t panic, this one probably won’t be around for a good 13 years yet – well, bar the time-travel side of things.)

Back to present day: in fact, for the non-squeamish, you can watch a video of the COTS-destroyer in action below.

How does it work then?

A paper by Dayoub et al. presented at the IEEE/RSJ International Conference on Intelligent Robots and Systems explains the approach.

Firstly it should be noted that the challenge of recognising these starfish is considerable. The paper informs us that, whilst COTS look like starfish when laid out on flat terrain, they tend to wrap themselves around or hide in coral – so it’s not as simple as looking for nice star shapes. Furthermore they vary in colour, look different depending on how deep they are, and have thorns that can have the same sort of visual texture as the coral they live in (go evolution). The researchers therefore attempt to assess the features of the COTS via various clever techniques detailed in the paper.

Once the features have been extracted, a random forest classifier, which has been trained on thousands of known photos of starfish/no starfish, is used to determine whether what it can see through its camera should be exterminated or not.

A random forest classifier is a popular data science classification technique, essentially being an aggregation of decision trees.

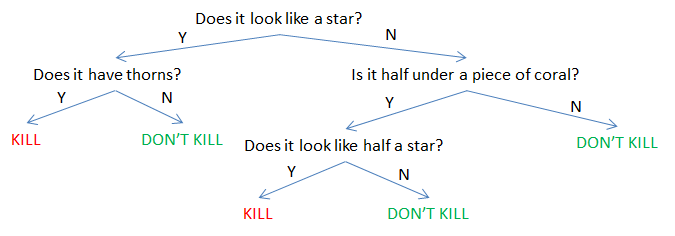

Decision trees are one of the more understandable-by-humans classification techniques. Simplistically you could imagine a single tree as providing branches to follow dependent on certain variables, which it automatically machine-learns from having previously processed a stack of inputs that it has been told are either one thing (a starfish) or another thing (not a starfish).

Behind the scenes, an overly simple version of a tree (with slight overtones of doomsday added for dramatic effect) might have a form similar to this:
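In Python, a hand-written stand-in for such a tree could be sketched as follows. The feature names (`thorn_texture_score`, `arm_count`, `looks_like_coral`) and every threshold are invented purely for illustration. A real tree would learn its own splits from labelled images, and nothing here is taken from the paper.

```python
from dataclasses import dataclass


@dataclass
class Sighting:
    """Hypothetical features extracted from one camera frame."""
    thorn_texture_score: float  # 0.0 (smooth) to 1.0 (very thorny); made-up scale
    arm_count: int              # number of arm-like shapes detected
    looks_like_coral: bool      # result of some other, imagined, check


def toy_tree(s: Sighting) -> str:
    """Answer one question per branch until a leaf (a decision) is reached."""
    if s.thorn_texture_score < 0.5:   # illustrative threshold, not a learned one
        return "not a starfish"
    if s.looks_like_coral:
        return "not a starfish"
    if s.arm_count >= 10:             # illustrative threshold, not a learned one
        return "starfish"
    return "not a starfish"


print(toy_tree(Sighting(thorn_texture_score=0.9, arm_count=14, looks_like_coral=False)))
```

The appeal of a tree like this is that you can read the path it took and see exactly why it reached its verdict.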

The random forest classifier takes a new image and runs many different decision trees over it – each tree has been trained independently and hence is likely to have established different rules, and potentially therefore make different decisions. The “forest” then looks at the decision from each of its trees, and, in a fit of machine-learning democracy, takes the most popular decision as the final outcome.
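The voting step on its own is simple enough to sketch in a few lines of Python. The three "trees" below are hand-written stand-ins with made-up feature names and thresholds, not trained models, and only the majority vote is meant to be representative. A real forest would contain many more trees, each learned from a different random sample of the training data.

```python
from collections import Counter
from typing import Callable, Dict, List

Features = Dict[str, float]
Tree = Callable[[Features], str]


# Three hypothetical trees, each looking at a different (invented) feature.
def tree_texture(f: Features) -> str:
    return "starfish" if f["thorn_texture"] > 0.6 else "not a starfish"


def tree_shape(f: Features) -> str:
    return "starfish" if f["arm_count"] >= 10 else "not a starfish"


def tree_colour(f: Features) -> str:
    return "starfish" if f["colour_match"] > 0.5 else "not a starfish"


FOREST: List[Tree] = [tree_texture, tree_shape, tree_colour]


def forest_predict(forest: List[Tree], f: Features) -> str:
    """Ask every tree for its decision and return the most popular one."""
    votes = Counter(tree(f) for tree in forest)
    return votes.most_common(1)[0][0]


frame = {"thorn_texture": 0.8, "arm_count": 12, "colour_match": 0.2}
print(forest_predict(FOREST, frame))
```

With an odd number of trees and only two possible answers there can never be a tie, which is one reason sketches like this tend to use an odd count.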

The researchers claim to have approached 99.9% accuracy in this detection – to the point where it will even refuse to go after 3D-printed COTS, preferring the product that nature provides.

Although it is probably not the type of killer robot that the Campaign to Stop Killer Robots campaigns against, or that the UN debates the implications of, if it is the first autonomous killer robot it can still conjure up the beginnings of some ethical dilemmas (even outside that of killing the starfish…after all, deliberate eradication/introduction of species to prevent other problems has not always gone well even in the pre-robotic stage of history – but one assumes this has been considered in depth before we got to this point!).

Although 99.9% accuracy is highly impressive, it’s not 100%. It’s very unlikely that many non-trivial classification models can ever truly claim 100% over the vast number of complex scenarios that the real world presents. Data-based classifications, predictions and so on are almost always a compromise between concepts like precision vs recall, sensitivity vs specificity, type 1 vs type 2 errors, accuracy vs power and whatever other names no doubt exist to refer to the general concept that a decision model may:

- Identify something that is not a COTS as a COTS (and try to kill it)

- Identify a real COTS as not being a COTS (and leave it alone to plunder the reef)
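To make the two kinds of error concrete, here is a small Python sketch that turns counts of each outcome into the usual summary numbers. The counts are entirely invented for illustration and are not results from the paper.

```python
def summarise(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Summarise a classifier's performance from four outcome counts.

    tp: real COTS correctly flagged        fp: non-COTS wrongly flagged
    fn: real COTS wrongly left alone       tn: non-COTS correctly ignored
    Assumes at least one flagged item and at least one real COTS,
    otherwise the divisions below would fail.
    """
    total = tp + fp + fn + tn
    return {
        "accuracy": (tp + tn) / total,   # share of all decisions that were right
        "precision": tp / (tp + fp),     # of everything flagged, how much was COTS
        "recall": tp / (tp + fn),        # of all real COTS, how many were flagged
    }


# Made-up counts, purely to show the calculation.
metrics = summarise(tp=90, fp=10, fn=30, tn=870)
print(metrics)
```

In this invented example the model is right 96% of the time overall, yet it still misses a quarter of the real starfish (recall of 75%) and one in ten of its targets is not a starfish at all (precision of 90%). A single headline accuracy figure can hide quite different mixes of the two error types.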

Deciding on the acceptable balance between each type of error is an important part of designing models. Without actually knowing the details, here it sounds like the researchers sensibly erred on the side of caution, such that if the robot isn’t very sure it will send a photo to a human and await a decision.
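One common way to build in that sort of caution is to act only when the model is very confident and pass the uncertain middle ground to a person. The sketch below is a guess at the general shape, not a description of how the COTSbot actually does it. The `decide` function, the idea of a single confidence score (for instance, the share of trees voting "starfish") and both threshold values are assumptions.

```python
def decide(confidence: float,
           inject_above: float = 0.95,   # hypothetical threshold
           ignore_below: float = 0.20) -> str:  # hypothetical threshold
    """Map a 0..1 confidence that the object is a COTS to an action."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= inject_above:
        return "inject"
    if confidence <= ignore_below:
        return "leave alone"
    # Not sure either way: send the photo to a human and wait.
    return "ask a human"


for score in (0.99, 0.60, 0.05):
    print(score, "->", decide(score))
```

Raising `inject_above` trades false positives for more work for the human (and more missed starfish if nobody answers), which is exactly the balancing act described above.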

It’s also the case that the intention is not to have the robot kill every single COTS, which suggests that false negatives might be less damaging than false positives. One should also note that it’s not going to be connected to the internet, making it hard for the average hacker to remotely take it over and go on a tourist-injection mission or similar.

However, given it’s envisaged that one day a fleet of 100 COTSbots, each armed with 200 lethal shots, might crawl the reef for 8 hours per session, it’s very possible a wrong decision may be made at some point.
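A back-of-the-envelope calculation in Python shows why. It rests on a big assumption that the source does not make: that every shot is an independent decision with a 0.1% chance of being wrong (simply 100% minus the quoted 99.9%). The real per-injection error rate depends on how the accuracy figure was measured and on the human-in-the-loop check, so treat the numbers as illustrative only.

```python
bots = 100            # fleet size mentioned above
shots_per_bot = 200   # lethal shots per bot mentioned above
error_rate = 0.001    # ASSUMPTION: 1 - 0.999, applied to each shot independently

total_shots = bots * shots_per_bot
expected_wrong = total_shots * error_rate
p_at_least_one_wrong = 1 - (1 - error_rate) ** total_shots

print(f"total shots if every bot empties its tank: {total_shots}")
print(f"expected wrong injections: {expected_wrong:.0f}")
print(f"chance of at least one wrong injection: {p_at_least_one_wrong:.6f}")
```

Under those assumptions a full fleet firing every shot makes 20,000 injections, of which around 20 would be expected to hit the wrong target, and the chance of getting through with no mistakes at all is effectively nil.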

Happily, it’s unlikely to accidentally classify a human as a starfish and inject it with poison (plus, although I’m too lazy to look it up, I imagine that a starfish dose of starfish poison is not enough to kill a human) – the risk the researchers see is more that the injection needle may be damaged if the COTSbot tries to inject a bit of coral.

Nonetheless, a precedent may have been set for a fleet of autonomous killer robot drones. If it works out well, perhaps it starts moving the needle slightly towards the world of handily-acronymed “Lethal Autonomous Weapons Systems” that the US Defense Advanced Research Projects Agency is supposedly working on today.

If that fills you with unpleasant stress, there’s no need to worry for the moment. Take a moment of light relief and watch this video of how good the 2015 entrants to the DARPA robotics challenge were at stumbling back from the local student bar traversing human terrain.